Context engineering beats prompt engineering

👋 Hey, and welcome to technical-ish: the newsletter for the kind-of-technical-but-not-really crowd.

Every two weeks: practical ways to use AI in your work, no coding required. Just real workflows, tools that actually help, and experiments I've run so you don't have to.

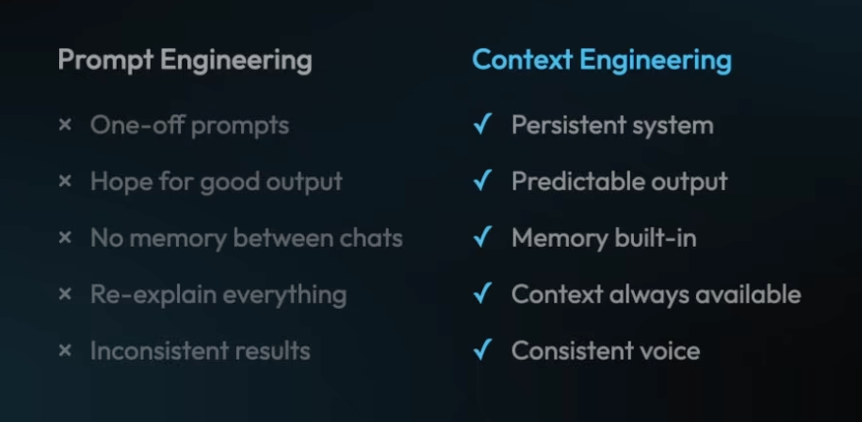

In spite of what many creators out there might tell you, the quality of your AI output has little to do with how you phrase your prompts and everything to do with how you provide context (and whether you provide it once, systematically, or start from scratch every time). This is what I learned after moving from ChatGPT's chat interface to Claude Code.

This article argues that obsessing over prompting is overrated. The real leverage is in setting up reusable context for your projects.

The chat UI and its limits

Most of us, especially non-technical people, got into AI through the chat interface that ChatGPT popularized. That sleek text box became the default way of interacting with AI.

Simple as it is, it has its limitations.

- One-off prompts, not workflows

The chat gives you an answer, not a finished task. You still have to copy the output into a CMS, a slide deck, a Notion page, or an Excel sheet and stitch formatting, links, and brand voice back in. That connector work (moving and reshaping AI output) remains human, and it is where most of the time goes.

- Prompts that don’t persist

Most useful prompts are used once and forgotten. Even when you save a prompt, you rarely go back to your library to reuse it (I'm guilty of this too). The result is prompt roulette: you quickly type or dictate something and hope the output will be good.

- No true cross-session memory

Models only see what you feed them in the current session, within the limits of their context window. What vendors call “Memory” is stored input that gets fed back to the model at every session. It is not memory in the human sense, where we recall things on demand based on context and relevance. So unless you systematically surface the same context every time, outputs will drift.
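To make the memory point concrete, here is a minimal sketch in Python. It assumes a generic chat-style request built from role/content messages (a common shape, not any specific vendor's API or memory implementation), and the names `SAVED_NOTES` and `build_request` are hypothetical. Nothing here calls a model; it only shows what a model would actually receive in two separate sessions.

```python
# Minimal sketch (hypothetical names, generic role/content message shape assumed):
# the model keeps no state between sessions, so "memory" is just saved notes
# that get re-attached to every request.

SAVED_NOTES = [
    "Audience: non-technical professionals.",
    "Tone: friendly, concrete, no jargon.",
]


def build_request(notes: list[str], user_prompt: str) -> list[dict]:
    """Assemble the full input a chat model would see for one session."""
    context = "\n".join(f"- {note}" for note in notes)
    return [
        {"role": "system", "content": f"Things to remember:\n{context}"},
        {"role": "user", "content": user_prompt},
    ]


# Two separate sessions: each starts from nothing, and the notes only
# "persist" because we attach them again each time.
monday = build_request(SAVED_NOTES, "Draft an intro for this week's issue.")
friday = build_request(SAVED_NOTES, "Summarize the issue in three bullets.")
```

Both requests carry the same notes, so the context stays consistent across sessions; leave the notes out and each session starts from a blank slate. That, in a nutshell, is the case for setting up context once and reusing it.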

The pains and the failed fixes

This has consequences on both the producing and the consuming side.

On the producing side, I was getting frustrated with the results: generic, inconsistent between sessions, and simply bah. Prompt-and-pray is not a scalable strategy.

On the consuming side, a big chunk of the content posted online is now generated by AI. That isn't inherently bad, unless the content is bad. I started developing a sense for "this was so written by AI," often paired with "this sounds like everything else I just read." When it all starts to sound familiar and interchangeable, engaging with it is just a waste of time. It would be a pity to let AI water down our tics and quirks: they are precisely what make the world colorful and special.

The chat UI offers a few workarounds, but none of them is sufficient:

- Prompt the prompt (ask a model to generate the prompt for you): handy for big one-offs, but not a reliable strategy for every interaction.

- Projects: better but fragile. Format, file quality, and ingestion matter, and large uploads can be partially ignored or inconsistently used.

- Custom GPTs/Gems: useful, but they can drift, be susceptible to prompt injection, or become a black box when shared.